See where revenue or data leaves the system.

Missed intake. Weak follow-up. Uncontrolled AI use. Start with the leak. Then close it.

This is not for early-stage teams that are still experimenting.

This is for companies already operating with real data and revenue.

Limited onboarding capacity. No long-term contracts.

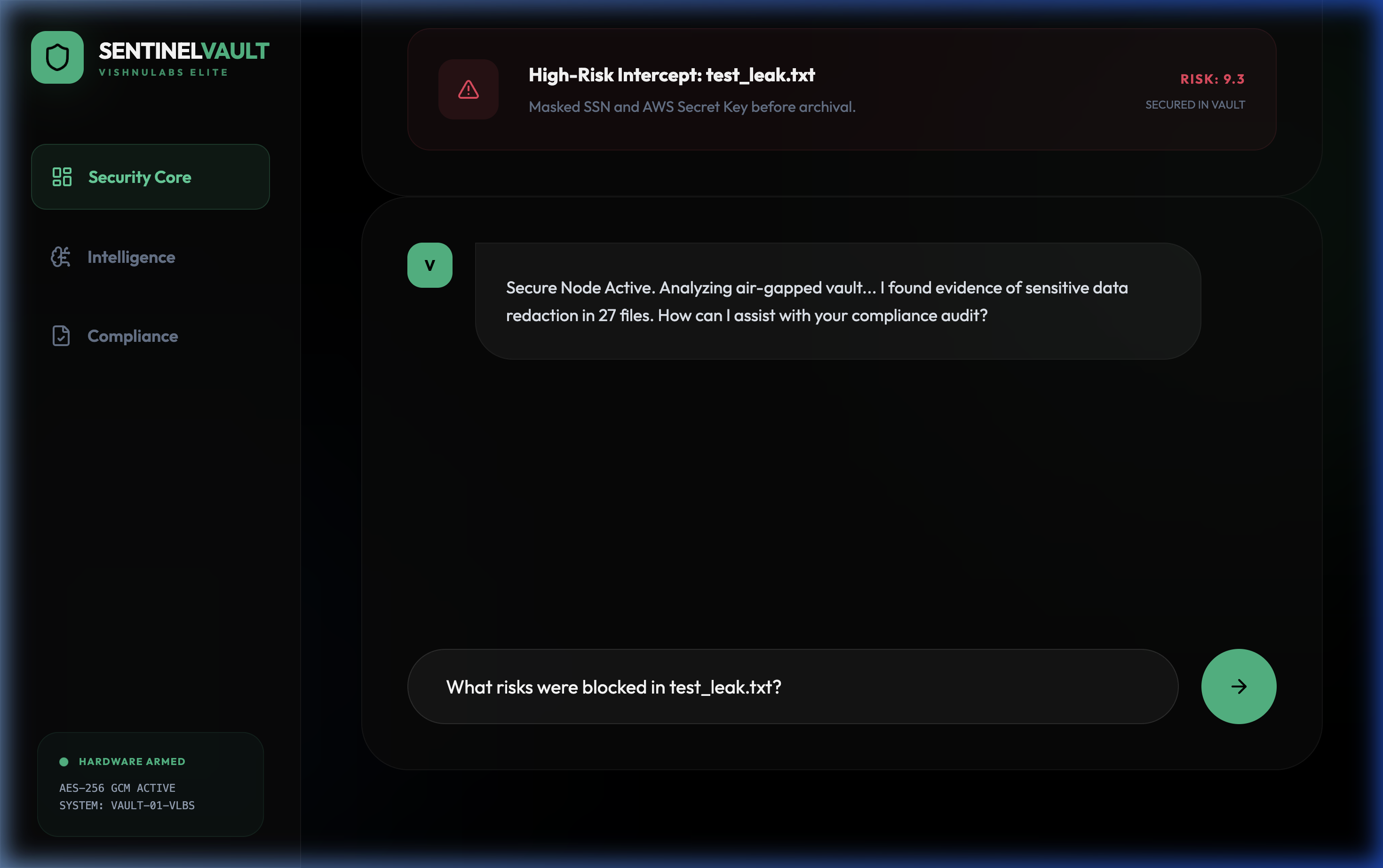

Core promise

One control model across lead flow, workflow execution, and data risk.

What it removes

Fragile handoffs, missed follow-up, and manual rescue work.